Claude Code after 1 month and $4,016.42

July 27, 2025

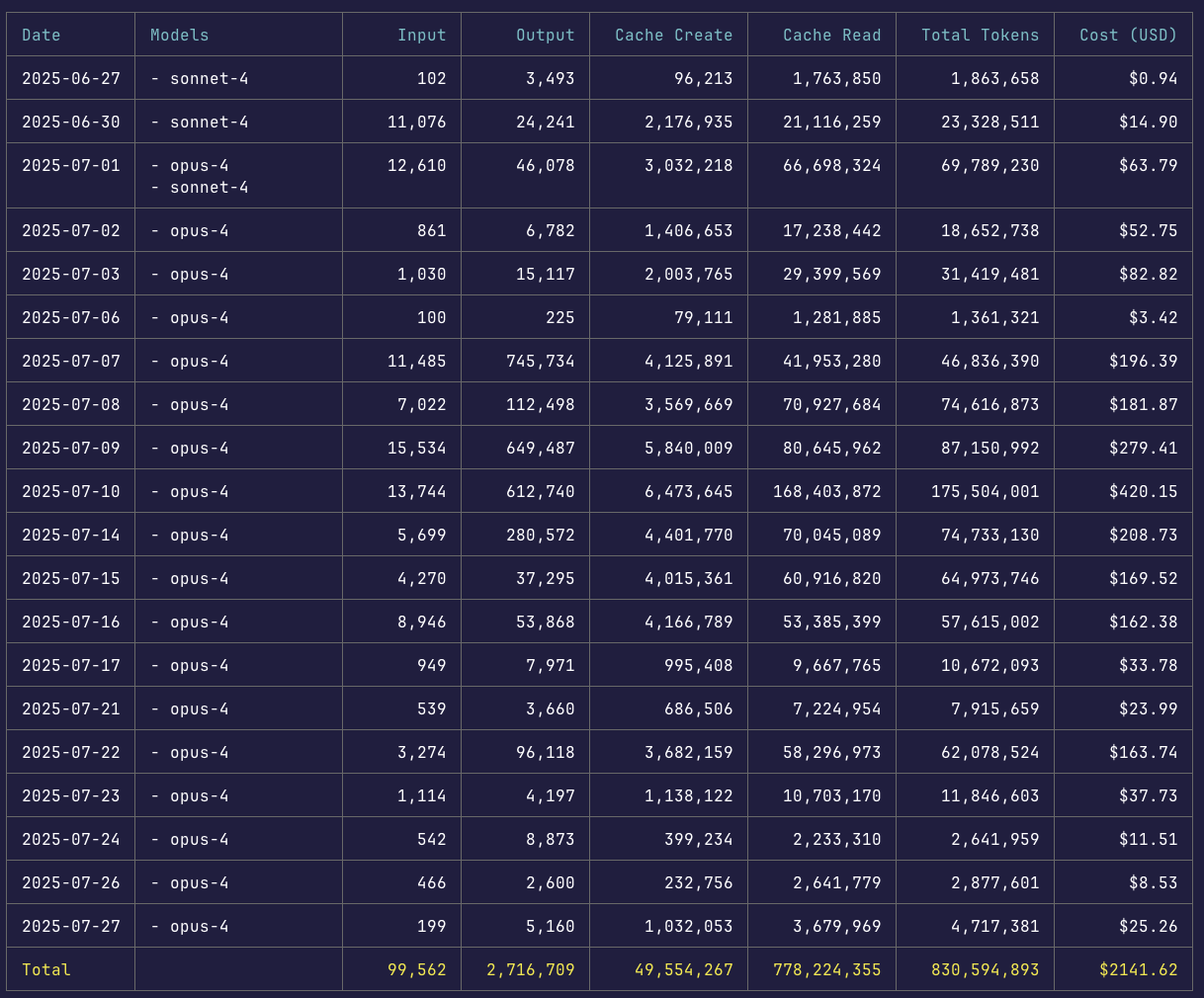

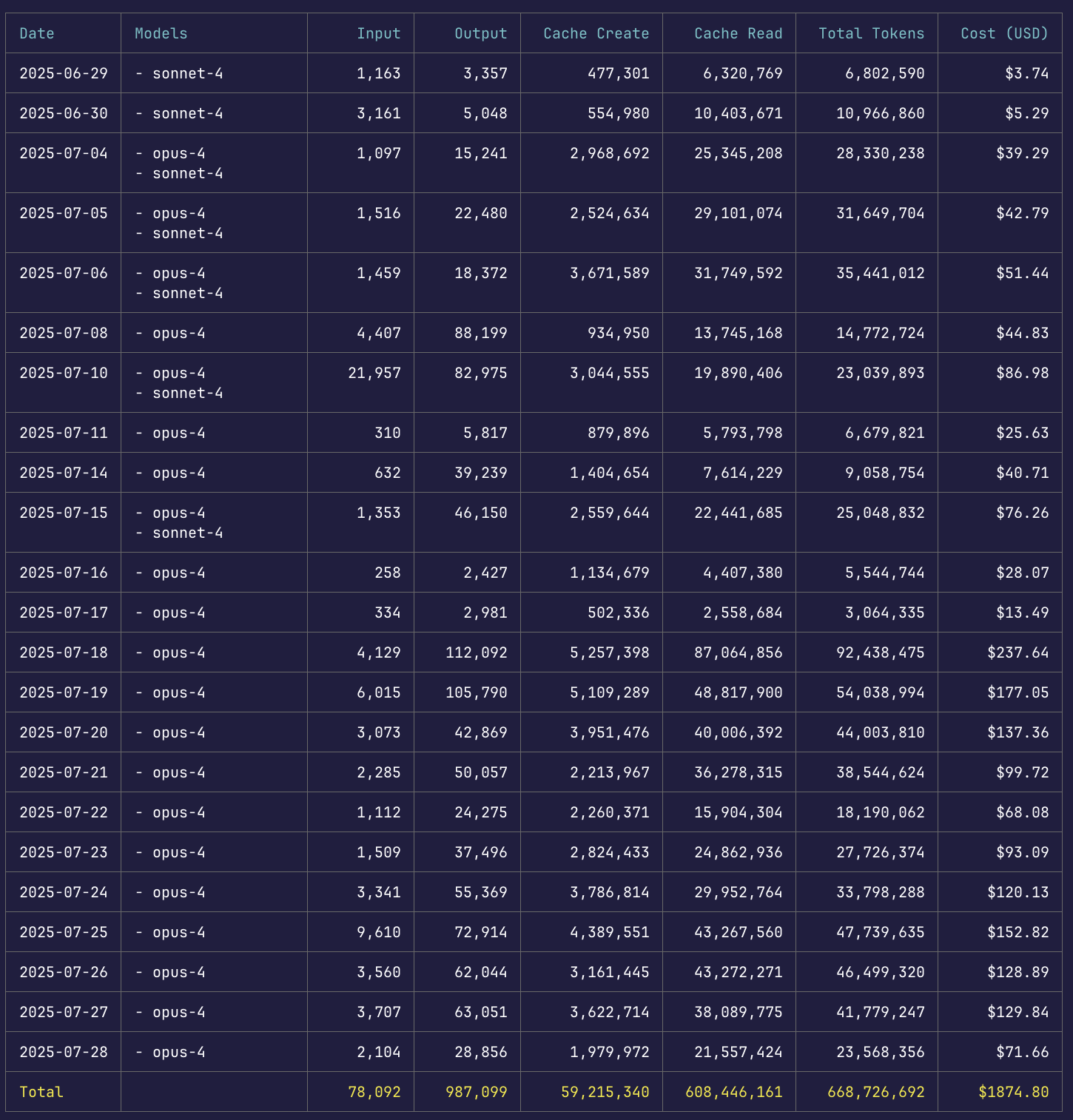

I ran up $4,016.42 worth of API usage on Claude Code in one month, and thanks to the Max plan I paid only $200.

Here's what I learned.

What it is

A terminal tool that reads your files, writes code, and runs commands, with no IDE or graphical interface at all. You talk to the model and it acts on your codebase directly.

It works like a developer would: it greps for relevant files, spins up subagents to gather context, then brings everything back to the main thread. When I asked it to add error handling to an API endpoint, it found my existing error handler, matched the format, used the right status codes, and updated the docs.

What it's good at

Migrating auth from sessions to JWTs with backward compatibility is the kind of thing I'd budget three days for. Claude Code did it in four hours. It read 47 files, created a migration plan, waited for approval, then updated 23 endpoints, wrote a compatibility layer, and ran the tests.

It really shines when it can see the full picture. Give it a CLAUDE.md file with your conventions and it matches your style from the start.

Never run more than three agents

I deployed 7 subagents to write test cases for a legacy project, and the results were a mess. Half the tests followed one format while the other half went in a completely different direction.

The problem is implicit decisions. Each agent makes small choices about coding style, variable naming, and architectural patterns that clash when you combine them. Three agents is the max before things start falling apart.

Context beats prompts

From Walden Yan's research on context engineering:

The telephone game kills agent systems. Every handoff loses context, so an instruction like "implement user authentication with OAuth" degrades a little more with each pass.

Context beats clever prompting. A mediocre prompt with complete context beats a perfect prompt with partial context every time.

Linear beats parallel. One agent that's aware of all previous decisions produces better results than three agents with partial context. If you go parallel, limit it to read-only tasks.

For parallel work, try the conductor pattern: one agent plans, a second reviews, and the first implements. Reset context with /clear between distinct tasks.
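The plan, review, implement cycle can be written down as a small loop. This is only a sketch of the idea: `Agent` is a hypothetical stand-in for "send a prompt to an agent session and get text back", and `conduct`, `builder`, and `reviewer` are names made up for illustration, not part of Claude Code.

```python
from typing import Callable

# Hypothetical stand-in: takes a prompt, returns the agent's text reply.
Agent = Callable[[str], str]


def conduct(task: str, builder: Agent, reviewer: Agent) -> dict[str, str]:
    """Run one plan -> review -> implement cycle for a single task."""
    # Step 1: the first agent plans without touching any files.
    plan = builder(f"Plan only, do not edit files yet.\nTask: {task}")

    # Step 2: a second agent reviews the plan.
    review = reviewer(f"Review this plan and list concerns.\nPlan: {plan}")

    # Step 3: the first agent implements, seeing both its own plan and the
    # review in one prompt so no decision is lost in the handoff.
    result = builder(
        "Implement the plan, addressing the review.\n"
        f"Plan: {plan}\nReview: {review}"
    )
    return {"plan": plan, "review": review, "result": result}
```

The point of the shape is that the implementing agent is the same one that planned, so it carries its own earlier decisions forward instead of inheriting a summary.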

Where it breaks

It invents things. Last month it conjured @/lib/quantum-cache, a module that does not exist, complete with detailed explanations of "advanced caching strategies." Always verify your imports.
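One way to catch an invented import before it reaches a commit is to check that every aliased specifier resolves to a real file. Here is a minimal sketch in Python, assuming a project where `@/` maps to a single source root. The function names, the extension list, and the alias mapping are all assumptions for illustration; a real project should read them from its own bundler or compiler config.

```python
import re
from pathlib import Path

# Matches '@/...' specifiers in `import x from '@/a'`, `import '@/a'`,
# and `require('@/a')`. The '@/' alias itself is an assumption.
ALIAS_IMPORT = re.compile(r"""(?:from|import|require\()\s*['"]@/([^'"]+)['"]""")

# Assumed set of extensions a specifier may resolve to.
EXTENSIONS = ("", ".ts", ".tsx", ".js", ".jsx")


def resolves(root: Path, spec: str) -> bool:
    """True if `spec` maps to a file, or to a directory with an index file."""
    base = root / spec
    if any(Path(f"{base}{ext}").is_file() for ext in EXTENSIONS):
        return True
    return any((base / f"index{ext}").is_file() for ext in EXTENSIONS if ext)


def find_missing_imports(source: str, root: Path) -> list[str]:
    """List '@/...' specifiers in `source` that do not resolve under `root`."""
    specs = ALIAS_IMPORT.findall(source)
    return [f"@/{spec}" for spec in specs if not resolves(root, spec)]
```

Run over each changed file, anything this returns is worth a second look before you accept the edit.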

Scope creep. A bug fix can balloon into a refactor if you're not careful. Constrain it explicitly: "Fix ONLY the error handling in lines 42-47. Make no other changes."
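You can also check after the fact whether a change stayed inside the lines you named. The sketch below walks a unified diff (the `@@ -start,count +start,count @@` format that `git diff` prints) and reports original line numbers touched outside an allowed range. It assumes a single-file diff, and `out_of_scope_lines` is a made-up helper name, not an existing tool.

```python
import re

# Unified diff hunk header: @@ -old_start[,old_count] +new_start[,new_count] @@
HUNK = re.compile(r"^@@ -(\d+)(?:,\d+)? \+\d+(?:,\d+)? @@")


def out_of_scope_lines(diff: str, first: int, last: int) -> list[int]:
    """Return original line numbers changed outside [first, last].

    Assumes `diff` covers one file. Removed lines are checked against their
    own line number; added lines are checked against the original line they
    are inserted before.
    """
    offenders = set()
    old_line = None  # stays None until the first hunk header is seen
    for line in diff.splitlines():
        header = HUNK.match(line)
        if header:
            old_line = int(header.group(1))
            continue
        if old_line is None:
            continue  # skip the ---/+++ file headers
        if line.startswith("-"):
            if not first <= old_line <= last:
                offenders.add(old_line)
            old_line += 1
        elif line.startswith("+"):
            # An insertion directly after `last` still counts as in scope.
            if not first <= old_line <= last + 1:
                offenders.add(old_line)
        elif line.startswith(" "):
            old_line += 1
    return sorted(offenders)
```

With the prompt above, you would call it with `first=42` and `last=47`; an empty list means the edit stayed where you told it to.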

The Max plan has usage limits. Batch related tasks together so you get more out of each session: for example, five UI tweaks in one go.

Should you use it?

Six months ago, AI coding tools were toys. Now Claude Code handles production systems, and that gap closed faster than anyone expected.

Start with a CLAUDE.md describing your project, try the explore-plan-code-commit workflow, and see what happens.
